Leveraging Assessment to Drive Quality in Business Education

Sponsored Content

Higher education equips learners with the 21st-century competencies needed to be successful in our knowledge-based economy. Continual improvement to the ways institutions prepare learners to be successful is an integral part of this process; global societies' betterment depends on it.

One key area related to quality in higher education is accreditation. Accreditation provides a framework for elevating quality, aligning goals with results, and ensuring that the institution meets all of its stakeholders' expectations.

The assessment of learning is essential to improving quality and has rightly become a critical part of the accreditation process. Accreditation agencies require programmatic assessment to encourage the continuous quality improvement of academic programs. Evaluating assessment data reveals trends that enable a clearer understanding of the degree to which learning is taking place. Even more, assessment is necessary to “close the loop,” a critical component of the assurance of learning process. Closing the loop means analyzing assessment results, using the results to make program changes that enhance learning, and reassessing outcomes to determine the impact of those changes.

The Assessment and Sample

Given that schools are expected to have the assessment results needed to drive quality, it is vital that educators understand whether this effort has an impact on quality. To find out, Peregrine Global Services analyzed 10 years of programmatic assessment data in aggregate.

The sample included 2,602 business programs and 511,143 business administration exams that directly measured retained knowledge in key business topics. Exams included those administered at the beginning of a program (inbound exam) and at the end of a program (outbound exam). We further used the exams to assess retained knowledge at the associate, bachelor’s, master’s, and doctoral degree levels. The large dataset allowed for a variety of statistical analysis and evaluation approaches.

The exams were administered online with randomized question selection. Peregrine’s exams have unique security features and are not required to be proctored unless the institution administering the exam wishes to do so. Additionally, we captured the program's modality (traditional, online, or blended) to understand how quality improvement and retained knowledge are impacted by the learning environment. Traditional programs are academic programs delivered at a campus location, online programs are completed entirely or almost entirely online, and blended programs combine online and campus-based instruction.

Understanding Delivery Modality

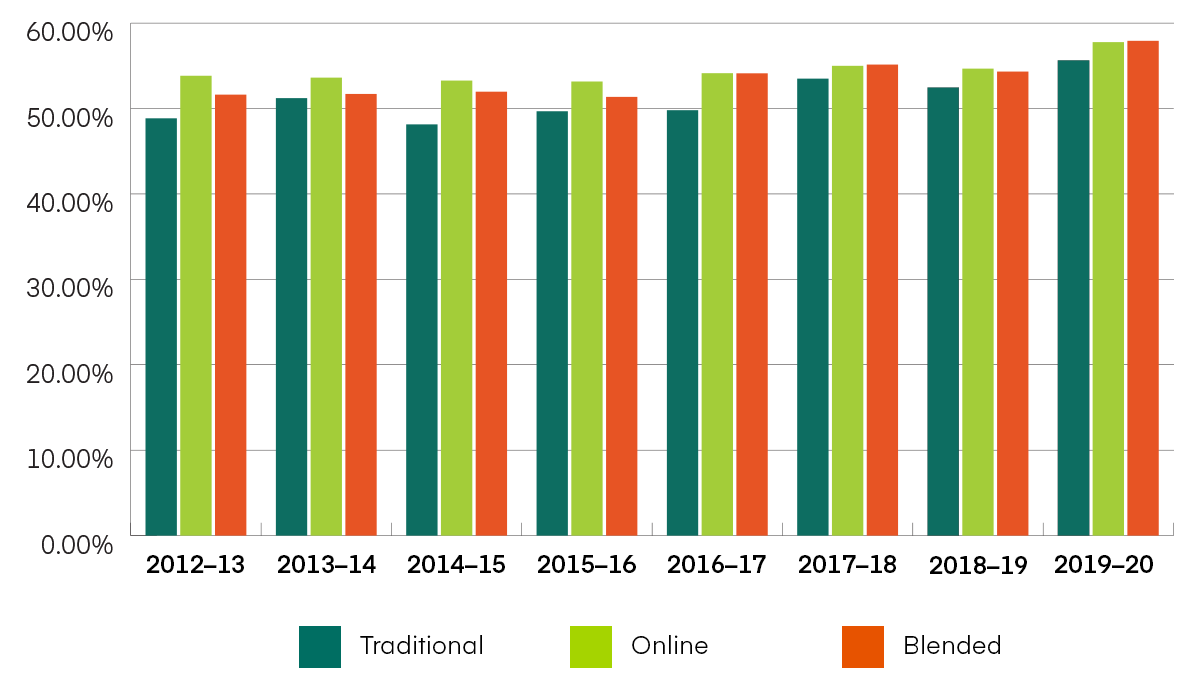

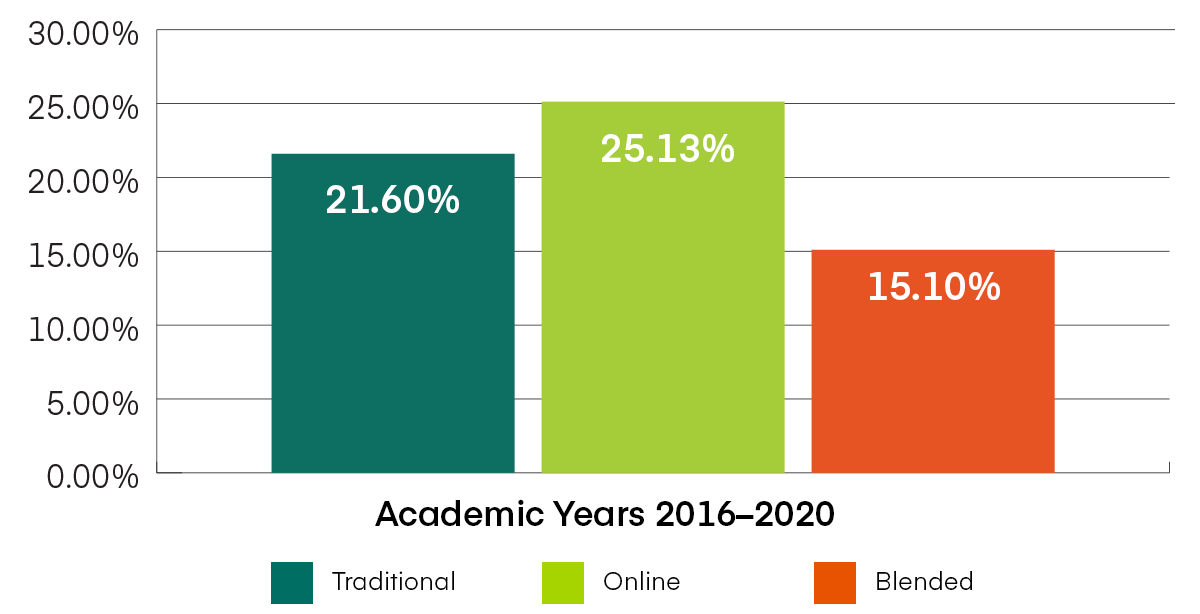

The delivery modality showed differences in retained knowledge at the end of a program. According to the data analysis, students in online and blended programs outperformed those in traditional programs at the bachelor’s level (Figure 1). A possible reason for this outcome is that students studying online or in a blended program are more likely to be of nontraditional age and to begin the program with foundational business knowledge gained while working in industry.

According to data from U.S. News and World Report, the average learner age across 227 online bachelor’s degree programs was 32 in 2015-16. Given the recent accelerated transition to online learning caused by the COVID-19 pandemic, student demographics related to delivery modality, as well as trends in retained knowledge, are likely to continue changing.

Figure 1. Business Administration Assessment Results: Bachelor’s—Total Exam Means

Exam totals for business bachelor’s degree program outbound exams for the Business Administration (BUS) assessment service from academic year 2012-13 through 2019-20, by delivery modality.

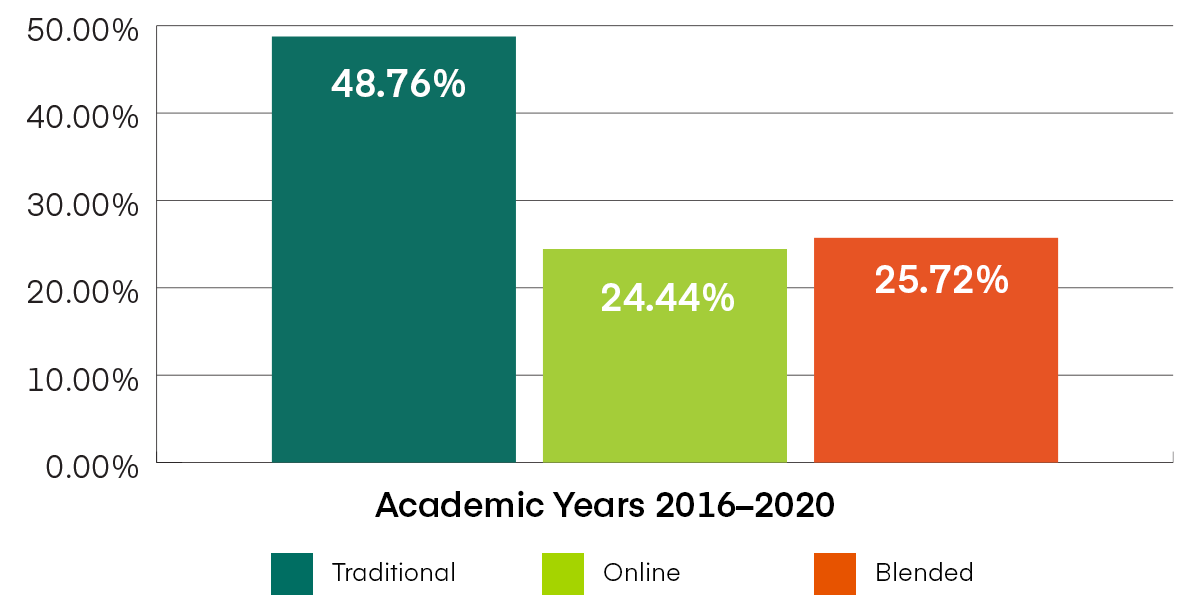

Although online and blended learners scored higher on the outbound exam, traditional learners showed the largest knowledge gain between the inbound and outbound exams, at 48.78 percent, compared with 24.44 percent and 25.72 percent for learners in online and blended programs, respectively (Figure 2). This reinforces the likelihood that learners in online and blended programs bring knowledge from prior experience.

Figure 2. Business Administration Assessment Results: Bachelor’s—Percentage Change

Bachelor’s-level percentage change (knowledge gain) for Business Administration (BUS) assessment service for the past four academic years, by delivery modality. Percentage change is the difference between the inbound and outbound exam scores.
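As a rough illustration of how a knowledge-gain figure like this can be computed, the sketch below assumes that percentage change is the outbound gain expressed relative to the inbound score. The article does not spell out the exact formula, so this is an assumption, and the function name and the scores used are hypothetical rather than values from the dataset.

```python
def percent_change(inbound_mean: float, outbound_mean: float) -> float:
    """Relative knowledge gain from inbound to outbound exam, in percent.

    Assumption: the gain is measured relative to the inbound mean score.
    """
    if inbound_mean <= 0:
        raise ValueError("inbound_mean must be positive")
    return (outbound_mean - inbound_mean) / inbound_mean * 100.0


# Hypothetical mean scores (not taken from the assessment data).
example_gain = percent_change(inbound_mean=40.0, outbound_mean=60.0)
print(f"Knowledge gain: {example_gain:.2f}%")
```

Under this reading, a group that starts with a higher inbound score can finish with a higher outbound score and still show a smaller percentage change, which is consistent with the pattern described for online and blended learners.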

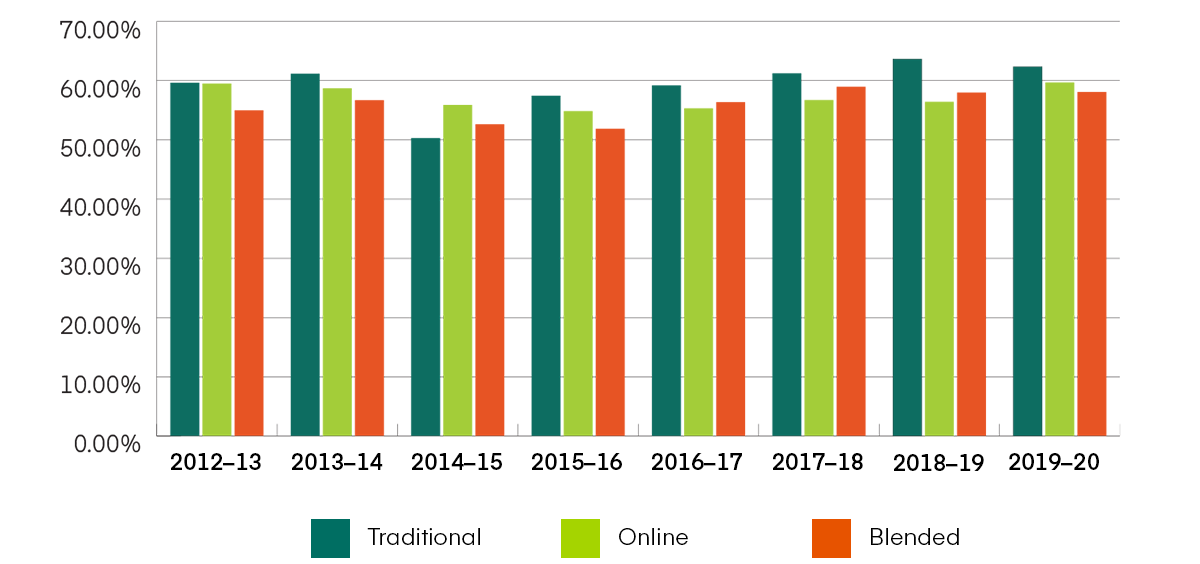

The master’s-level assessment showed very different results in how modality impacts retained knowledge. Students in traditional programs outperformed those in online and blended programs on the outbound assessment (Figure 3). Learner demographics might help explain this difference. Graduate students in traditional programs are more likely to move into a graduate program immediately after completing their undergraduate education, while learners in online and blended programs are more likely to return to school after taking time off to join the workforce.

Figure 3. Business Administration Results: Master’s—Exam Total Means

Exam totals for business master’s degree programs’ outbound exams for the Business Administration (BUS) assessment service from academic year 2012-13 through 2019-20, by delivery modality.

Much like we saw in the analysis of bachelor’s-level exams, the master’s students who scored lower on the outbound assessment also experienced the most growth during their programs. Learners in online master’s programs showed the highest percentage change from inbound to outbound scores, followed by learners in traditional programs, and then those in blended programs (Figure 4).

Figure 4. Business Administration Assessment Results: Master’s—Percentage Change

Master’s-level percentage change (knowledge gain) for Business Administration (BUS) assessment service for the past four academic years, by delivery modality. Percentage change is the difference between the inbound and outbound exam scores.

While exam results do show differences between delivery modalities, which are likely due to differences in student demographics, the data also reveal that each delivery modality can provide quality education. The modality of learning does not necessarily impact learning outcomes as much as the demographics and experience of the institutions' learners. Even more, Figures 1 and 2 show that, as time progresses, the differences in exam results among the modalities decrease, and retained knowledge generally increases. The heightened movement toward digital transformation and innovation has enhanced the student experience, which may help explain these trends.

Trends Over Time

When we began analyzing the assessment data, we asked the questions, “Does quality improve over time with the use of assessment? What trends, if any, exist for schools that have conducted programmatic assessments for at least six years?”

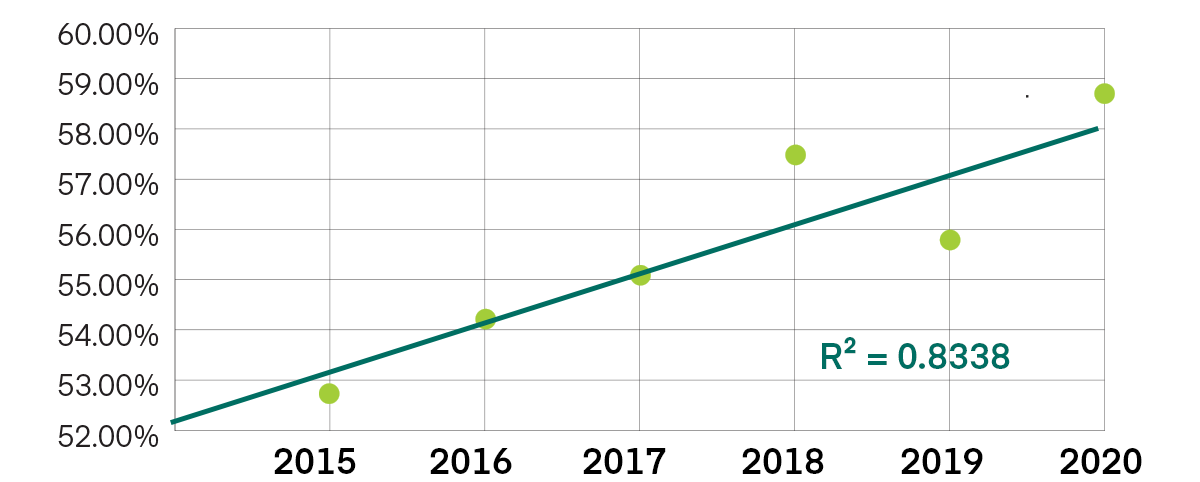

To understand the potential impact of assessment on bachelor’s and master’s degree program quality, we analyzed outbound exam results across eight exam topics for the past six years. The six-year range allowed us to work with a large sample size that encompassed multiple groups of students.

The sample we used to analyze the bachelor’s-level programs included seven schools and 914 exams for 2014-15; 7,579 exams for 2015-16; 7,838 exams for 2016-17; 7,538 exams for 2017-18; 8,027 exams for 2018-19; and 8,476 exams for 2019-20. Figure 5 shows the exam scores plotted with a trend line and R² value.

Figure 5. Bachelor’s Outbound Total Exam Score

Combined bachelor’s outbound exam data (n=7 schools), where all eight topics were included on the exam from academic year 2014-15 through 2019-20. All schools used the same exam for each of the six academic years.
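For readers who want to reproduce this kind of trend analysis on their own outbound results, the sketch below fits an ordinary least-squares trend line and computes its R² value. It assumes a simple linear fit of mean score against academic year; the article does not state how its trend lines were fitted, and the function name and yearly scores here are hypothetical placeholders, not figures from the study.

```python
def fit_trend_line(xs, ys):
    """Ordinary least-squares line through (xs, ys).

    Returns (slope, intercept, r_squared).
    """
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need two or more paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or ss_tot == 0:
        raise ValueError("xs and ys must each contain some variation")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, r_squared


# Hypothetical mean outbound scores for six academic years
# (index 0 = 2014-15, ..., 5 = 2019-20); not the study's data.
year_index = [0, 1, 2, 3, 4, 5]
mean_scores = [54.1, 55.0, 55.6, 56.9, 57.3, 58.4]

slope, intercept, r_squared = fit_trend_line(year_index, mean_scores)
print(f"slope per year: {slope:.3f}, R^2: {r_squared:.3f}")
```

A positive slope indicates that scores rise over time, and an R² value close to 1 indicates that the yearly means sit close to the fitted line.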

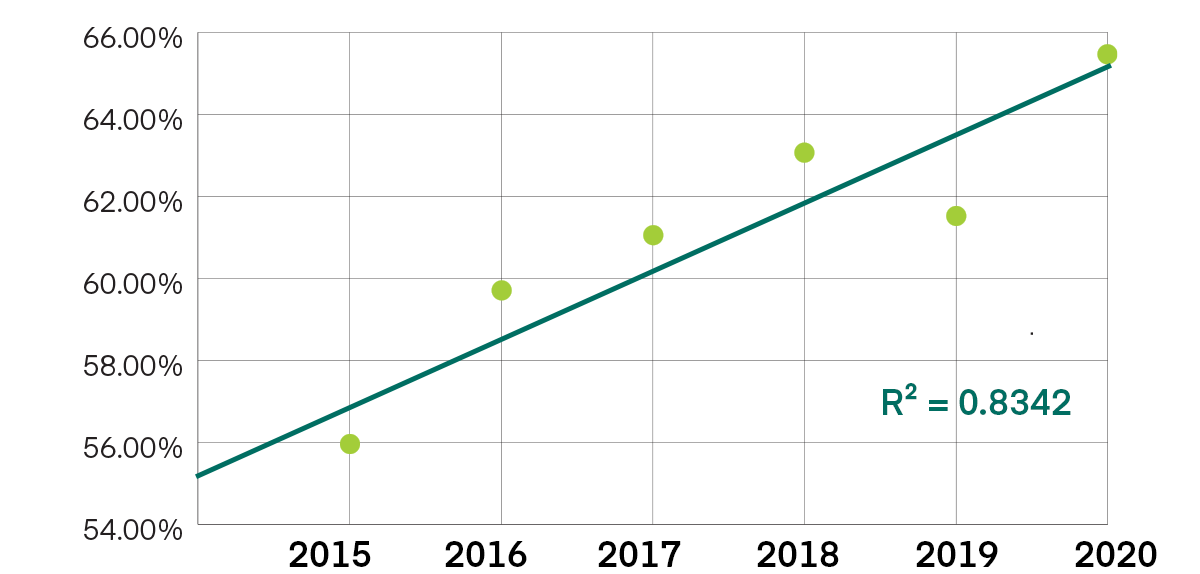

To evaluate assessment impact at the graduate level, we looked at outbound exam results from two schools, covering the past six years. The combined samples for the two schools included 1,236 exams for 2014-15; 1,343 exams for 2015-16; 1,367 exams for 2016-17; 1,130 exams for 2017-18; 1,140 exams for 2018-19; and 1,144 exams for 2019-20. Figure 6 shows the exam scores plotted with a trend line and R² value.

Figure 6. Master’s Outbound Total Exam Score

Combined master’s outbound exam data (n=2 schools), where all seven exam topics were included on the exam from academic year 2014-15 through 2019-20. Both schools used the same exam for each of the six academic years.

Our findings demonstrate that program quality, as seen in trends of the outbound exam scores, increases over time. The increase is due to a combination of factors, which are explored through five case studies in the white paper, “The Use of Summative Assessment to Improve Quality in Business Administration Programs.” The case studies reveal how using a comprehensive assessment solution and establishing a culture of quality aid in the assurance of learning process.

Assessment Impacts Quality

Assessing student learning within a program is one of the primary objectives of education. When done well, students thrive, programs become more and more innovative, and the educational institution better responds to its stakeholders. Thus, a robust assessment process, at the program level, is critical to student and institutional success.

The key to any assessment plan is to identify what the program wants the learners to know and be able to do, and to define learning objectives that are in line with the demands of the current economy and the industries to which graduates are expected to contribute. Choosing an assessment tool that accurately measures learning objectives is critical for closing the loop.

Download the full white paper, “The Use of Summative Assessment to Improve Quality in Business Administration Programs.”